Chirag Varun Shukla

Hello there!

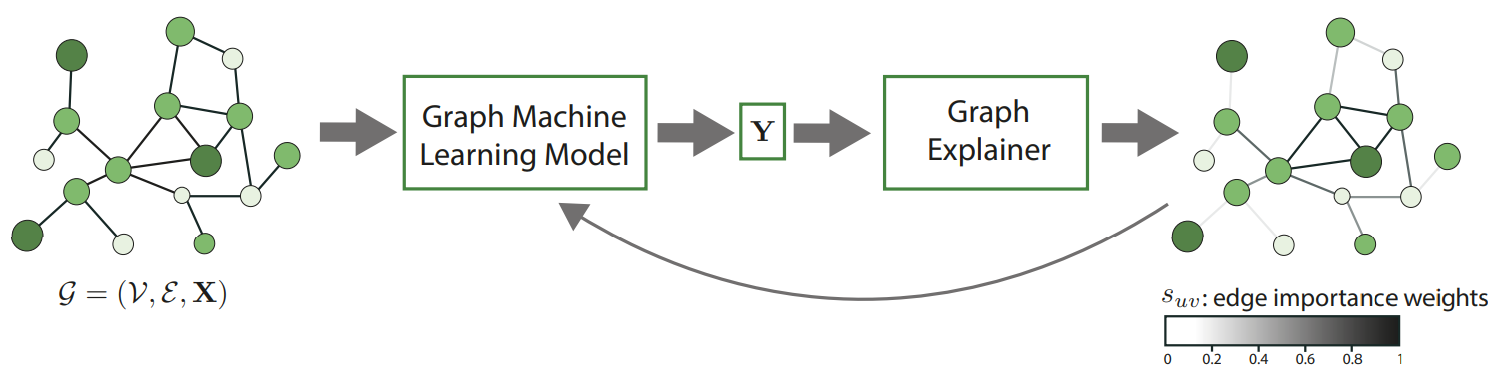

I am a PhD student at the Bavarian AI Chair for Mathematical Foundations of Artificial Intelligence, LMU Munich, supervised by Prof. Dr. Gitta Kutyniok. My research focuses on the interplay between Interpretability and Graph Neural Networks, with the goal of theoretically rigorous reliability guarantees for GNNs. I currently work on building mathematical foundations for post-hoc explainers as well as self-interpretable models for molecules as part of the MaGriDo project.

I received an M.Sc in Mathematics in 2019, with a focus on graph theory and fluid mechanics, and a B.Sc in Physics, Chemistry, Mathematics in 2017.

Interests

Interpretability

Graph Neural Networks

Molecular Machine Learning

Education

PhD in Mathematics, 2021-Present

LMU Munich, Germany

M.Sc in Mathematics, 2017-2019

Christ University, India

B.Sc in Physics, Chemistry, Mathematics, 2014-2017

Christ University, India

Publications

Towards Training GNNs using Explanation Directed Message Passing

Valentina Giunchiglia*, Chirag Varun Shukla*, Guadalupe Gonzalez, Chirag Agarwal